There’s a quiet frustration building inside a lot of companies right now.

They’ve experimented with AI, built prototypes, and in many cases shipped something that looks impressive in a demo. And yet, when it comes time to rely on it, to put it in front of customers, or to trust it inside real workflows, things start to break.

At the inaugural York IE AIConf in Ahmedabad, Ashish Patel, Senior Principal Architect for AI, ML & Data Science at Oracle, put words to what many teams are experiencing: “Demos are easy. Reliability is hard.”

That line captures the gap between experimentation and execution, and it points to a deeper truth. AI doesn’t fail because the models aren’t good enough. It fails because the systems around them aren’t.

The 90/10 Trap

Most teams fall into what Ashish described as the 90/10 trap. Ninety percent of the effort goes into building something that works in a controlled environment, while the final ten percent, the part that makes it reliable, scalable, and production ready, is where things begin to unravel.

The issue is not intelligence. It’s structure. Static workflows break when they encounter edge cases, and systems often lack memory, error handling, and proper tool integration. What looks like a smart system in a demo quickly reveals itself to be fragile in the real world.

Even more importantly, teams tend to misdiagnose the problem. They assume the model is the bottleneck, when in reality, the bottleneck is the lack of system capabilities around it.

That insight shifts the conversation from model selection to system design. And that’s where the real work begins.

Why Better Models Don’t Fix the Problem

If the model is not the bottleneck, then what is? The answer is context.

There’s a common belief that better models produce better results. It feels intuitive. Bigger models, more training data, and more intelligence should lead to better answers. But in practice, performance is not driven by intelligence alone. It’s driven by how well the system informs that intelligence.

As Ashish explained, a model’s output is only as reliable as the specific, up-to-date data provided in the prompt. Without context, even the most advanced models fail in simple ways. They don’t understand your business, your data, or your constraints, so they fill in the gaps. And they do it convincingly.

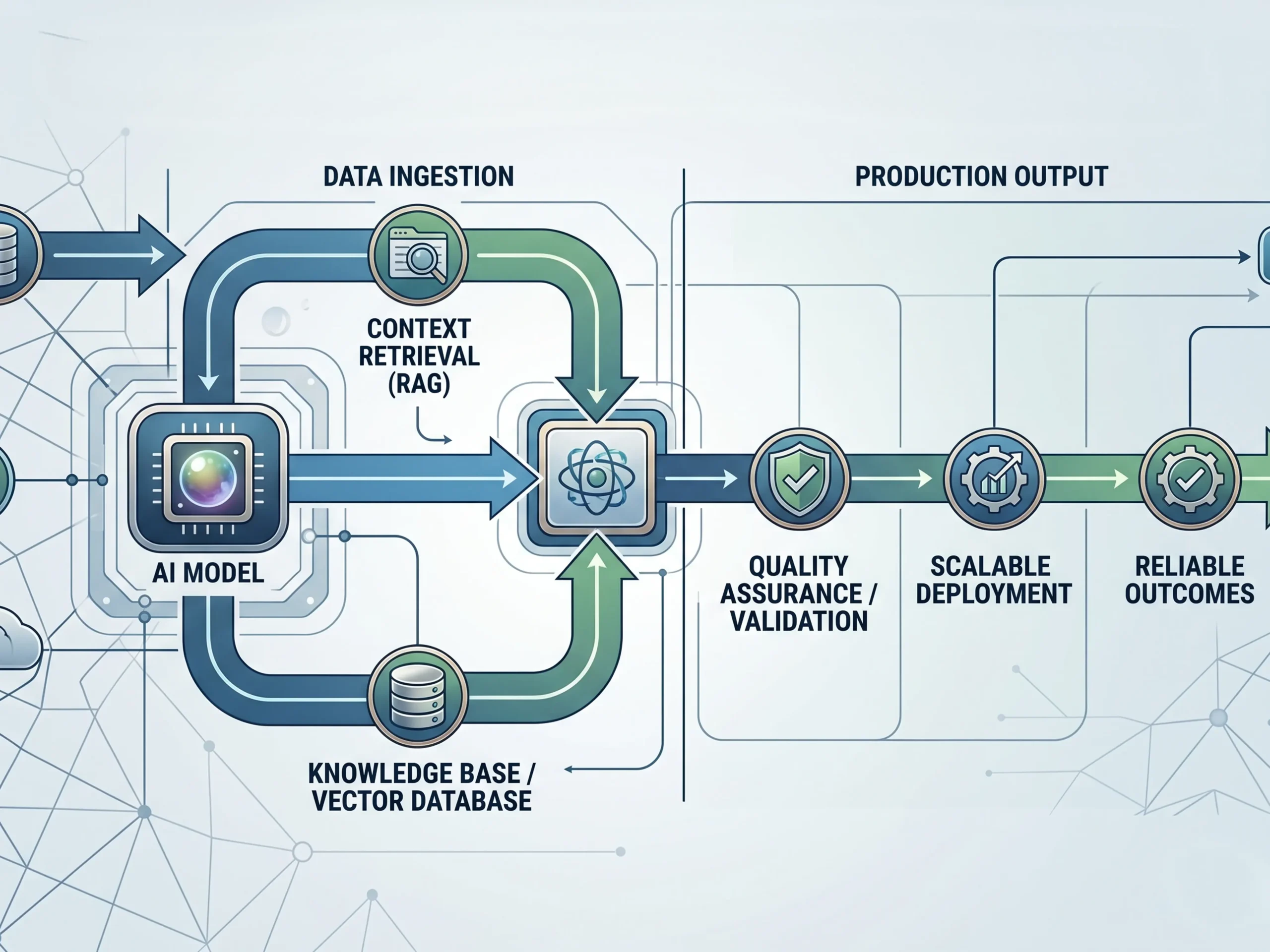

This is why so many teams struggle with accuracy. They invest in fine tuning, prompt engineering, and new tools, when the real issue is that the system is not providing grounded, relevant information. Ashish offered a practical rule that cuts through the noise: use RAG first. Ninety percent of agentic failures are context related, not behavior related.

That means your retrieval layer matters more than your model choice. Data quality and accessibility aren’t backend concerns. They’re the foundation of performance.
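To make the "use RAG first" rule concrete, here is a minimal sketch of a retrieval step that grounds a prompt before any model is called. It is an illustration under stated assumptions, not the approach shown in the talk: the `DOCUMENTS` list, the word-overlap scoring, and the names `retrieve` and `build_prompt` are all hypothetical, and a real system would likely use a search index or vector store instead.

```python
import re

# Hypothetical in-memory knowledge base (assumption for this sketch).
DOCUMENTS = [
    "Refunds are issued within 14 days of purchase.",
    "Support hours are 9am to 5pm IST on weekdays.",
    "Enterprise plans include a dedicated account manager.",
]


def tokenize(text: str) -> set[str]:
    """Lowercase the text and split it into a set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, documents: list[str], top_k: int = 2) -> list[str]:
    """Rank documents by word overlap with the question.

    Word overlap is a simple stand-in for real retrieval (assumption).
    Documents with no overlap are dropped entirely.
    """
    question_words = tokenize(question)
    scored = [(len(question_words & tokenize(doc)), doc) for doc in documents]
    scored = [(score, doc) for score, doc in scored if score > 0]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for _, doc in scored[:top_k]]


def build_prompt(question: str, documents: list[str]) -> str:
    """Build a prompt that carries retrieved context, or flags its absence."""
    context = retrieve(question, documents)
    if not context:
        # No grounding available: say so instead of letting the model guess.
        return f"Question: {question}\nContext: NONE. Reply that the answer is unknown."
    lines = "\n".join(f"- {item}" for item in context)
    return f"Answer using only this context:\n{lines}\nQuestion: {question}"
```

The design choice worth noting is the empty-context branch: when retrieval finds nothing, the prompt says so explicitly, which gives the model no gap to fill on its own.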

Hallucination Is a Design Problem

This also reframes one of the most talked about challenges in AI: hallucination.

Most teams treat hallucination like a glitch, something that occasionally happens and needs to be caught after the fact. But that framing misses the point. Garbage in, garbage out. Wrong context leads to wrong output.

Models are designed to be helpful. When they lack information, they fill in the gaps with plausible answers. They aren’t malfunctioning. They’re working exactly as designed. The failure is in the system that surrounds them.

There are three patterns that show up consistently. First, poor context, where the system can’t retrieve the right information. Second, no validation layer, where outputs are never checked before being used. And third, weak architecture, where there is no redundancy or second opinion built in.

Fixing hallucination is not about writing better prompts. It’s about building better systems through stronger retrieval, built-in validation, and structures that allow outputs to be tested before they’re trusted.
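A validation layer can be as small as a function that refuses any output it cannot tie back to the retrieved context. The sketch below assumes, purely for illustration, that the model is asked to reply with a JSON object containing an `answer` field and a verbatim `evidence` quote. That output shape and the name `validate_answer` are hypothetical, not something described in the session.

```python
import json


def validate_answer(raw_output: str, context: str) -> tuple[bool, str]:
    """Check a model reply before it is used; returns (ok, reason).

    Assumed reply shape (hypothetical): {"answer": "...", "evidence": "..."}
    where "evidence" must be a verbatim quote from the retrieved context.
    """
    try:
        data = json.loads(raw_output)
    except json.JSONDecodeError:
        return False, "output is not valid JSON"
    if not isinstance(data, dict):
        return False, "output is not a JSON object"

    answer = data.get("answer")
    evidence = data.get("evidence")
    if not isinstance(answer, str) or not answer.strip():
        return False, "missing answer"
    if not isinstance(evidence, str) or not evidence.strip():
        return False, "missing evidence"

    # The key check: the quoted evidence must actually appear in the context.
    if evidence.lower() not in context.lower():
        return False, "evidence not found in retrieved context"
    return True, "ok"
```

A substring check like this only catches unsupported quotes. It does not prove the answer follows from the evidence, so it is a first gate, not a complete one.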

From One Agent to Many

As teams begin to address these challenges, the architecture naturally evolves. Most start with a simple idea: build one powerful AI agent that can handle everything. It’s a logical starting point, but it quickly becomes limiting.

As tasks grow more complex, a single agent runs into cognitive overload. It’s responsible for too much context, too many decisions, and too many tasks at once. As that load increases, accuracy drops and errors become more frequent.

The solution is not to build a smarter single agent. It’s to build a system of agents.

In a multi agent architecture, each agent has a defined role. One researches, another analyzes, another executes, and another reviews. Instead of one generalist trying to do everything, you create a team of specialists. This structure introduces something most AI systems lack today: verification.

As Ashish noted, in a multi agent setup, one agent can double check the work of another. One agent produces an output, another reviews it, and a third synthesizes the result. The system becomes more reliable not because any single model is perfect, but because the system is designed to catch errors.
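The produce, review, synthesize flow described above can be sketched with plain functions standing in for agents. Everything here is an assumption made for illustration: the `Review` type, `run_pipeline`, and the toy agents are hypothetical names, and in a real system each function would wrap a model call with its own role prompt and context.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Review:
    approved: bool
    notes: str


# Each "agent" is just a function in this sketch (assumption).
Producer = Callable[[str], str]
Reviewer = Callable[[str, str], Review]
Synthesizer = Callable[[str, Review], str]


def run_pipeline(task: str, produce: Producer, review: Reviewer,
                 synthesize: Synthesizer) -> str:
    """One agent drafts, a second reviews, a third produces the final result."""
    draft = produce(task)
    verdict = review(task, draft)
    return synthesize(draft, verdict)


# Toy stand-ins so the sketch runs without any model.
def toy_producer(task: str) -> str:
    return "Refunds take 30 days."


def toy_reviewer(task: str, draft: str) -> Review:
    policy = "Refunds are issued within 14 days of purchase."
    if "14 days" in draft:
        return Review(True, "matches policy")
    return Review(False, f"draft conflicts with policy: {policy}")


def toy_synthesizer(draft: str, verdict: Review) -> str:
    # Only approved drafts pass through unchanged.
    if verdict.approved:
        return draft
    return f"NEEDS REVISION ({verdict.notes})"
```

With the toy agents, `run_pipeline("How long do refunds take?", toy_producer, toy_reviewer, toy_synthesizer)` returns a string starting with `NEEDS REVISION`, because the reviewer catches the draft's wrong figure before it reaches the user.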

This is the shift from isolated intelligence to coordinated intelligence, and from outputs to outcomes.

What Actually Separates Systems That Work

By the end of the session, the distinction became clear. There are two types of AI systems being built today.

The first are experimental. They’re impressive in demos but brittle in production, relying on prompts, linear workflows, and best case assumptions. The second are structured. They’re designed for real world conditions, incorporating memory, retrieval, validation, orchestration, and resilience.

These systems are built to recover when something breaks, not just to work when everything goes right. That’s the difference between building something that looks like AI and building something that actually works.
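One common way to build in that kind of recovery is to retry a failing step a few times and then switch to a fallback path. The sketch below is a generic pattern, not something taken from the session. The name `call_with_recovery` and its parameters are hypothetical, and catching every `Exception` is a simplification that a production system would narrow to known transient errors.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def call_with_recovery(primary: Callable[[], T], fallback: Callable[[], T],
                       retries: int = 2, base_delay: float = 0.5) -> T:
    """Try `primary` up to `retries + 1` times, then use `fallback`.

    The delay doubles after each failed attempt (exponential backoff).
    """
    for attempt in range(retries + 1):
        try:
            return primary()
        except Exception:  # simplification: narrow this in real code
            if attempt < retries:
                time.sleep(base_delay * (2 ** attempt))
    # Every attempt failed: degrade to the fallback instead of crashing.
    return fallback()
```

In practice `primary` might be a call to a model or a tool, and `fallback` a cached answer, a simpler model, or a handoff to a human.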

The Bottom Line

AI is not just a model problem. It’s a systems problem.

The teams that win in this next phase will not be the ones chasing the latest model release. They will be the ones investing in architecture, context, retrieval, validation, and coordination. They will move beyond demos and build for reliability.

Because in the end, the goal is not to create something that looks intelligent. It’s to create something that can be trusted. And that only happens when the system is designed to support it.

To stay up-to-date on all upcoming York IE events, follow us on LinkedIn.